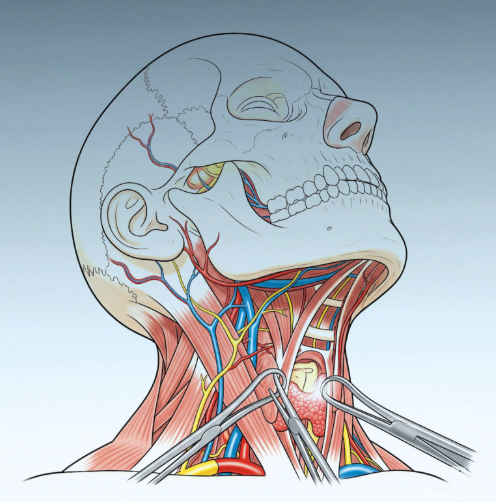

We are preparing to publish a new Manual of Otorhinolaryngology – Head and Neck Surgery (currently in press, coming soon). Our graphic designer recently suggested a cover featuring the image above. Can you spot what’s wrong with it? Unless you have a solid knowledge of neck anatomy, you might not notice, yet there are clear inaccuracies, such as arteries turning into nerves and vice versa.

This illustration was generated using AI. The generative AI created an anatomy that simply does not exist. While this might be acceptable for an abstract or artistic concept, it is absolutely unsuitable for an academic publication. The designer was pleased with the visual, but this is, in fact, a prime example of the risks inherent in generative AI, especially in fields that depend on scientific accuracy.

A group of academics led by researchers at Dutch universities has just published an open letter, “Stop the Uncritical Adoption of AI Technologies in Academia”. I take the liberty of transcribing a paragraph quoted in the media:

We must protect and cultivate the ecosystem of human knowledge. AI models can mimic the appearance of scholarly work, but they are (by construction) unconcerned with truth – the result is a torrential outpouring of unchecked but convincing-sounding “information”. At best, such output is accidentally true, but generally citationless, divorced from human reasoning and the web of scholarship that it steals from. At worst, it is confidently wrong. Both outcomes are dangerous to the ecosystem.

We are actively discussing these challenges on LinkedIn, exploring both the tremendous opportunities and the real dangers AI presents in medicine. The scale of the challenge feels overwhelming at times; it’s clear that maintaining rigorous scientific standards means swimming against the current.

J Granell. August 14, 2025